What is quantum computing? That’s a question still being asked by much of the world’s C-suite. But here at CC our multidisciplinary teams of physicists, engineers and designers are already helping to plot a credible pathway for a future powered by quantum technology. In this article, I want to unpack one particular aspect of its formidable potential – the ability of quantum computers to transform business workflows. And to do that, I’m going to take you into the quantum computing cloud.

Let me begin with an imagined but wholly realistic future scenario in the pharmaceutical industry. I’ve conceived it to put the potential of cloud-based quantum services into perspective. Picture if you will a company called Futuristic Pharmaceuticals Inc. An employee named Alex uploads omics data into a cloud server hosted by Acme Quantum. Overnight, proprietary macros call on a suite of quantum algorithms under the hood to generate a personalised treatment plan for Patient X, complete with a selection of drug variants.

Alex’s colleagues synthesise and test the recommendations over the coming days to establish the best treatment. All files are archived automatically to ensure regulatory compliance – but with favourable outcomes now almost guaranteed, Alex can move on to more challenging tasks, satisfied by a job well done.

Commercial applications of quantum computing

So, what’s standing in the way of these transformative quantum efficiency gains? To realise our vision for Alex and Futuristic Pharmaceuticals, we need more than just better quantum hardware. Quantum computers are complex and require specialist knowledge to interact with. For most non-specialists, it’s difficult even to comprehend why they are useful – let alone understand how to use them effectively.

Even where use cases are clear, the infrastructure to securely transfer data and distribute tasks effectively across quantum and classical HPC (high-performance computing) services does not yet exist. In today’s digital landscape, cloud services provide a flexible, secure and convenient means of access. Successful quantum cloud systems will help drive growth of this powerful, emerging technology.

The impossible is about to become possible.

The good news is that the cloud-based quantum computing ecosystem is growing, with new quantum software tools and cloud services such as Amazon Braket and Microsoft Azure Quantum coming on stream. But these cloud platforms are mostly designed for quantum algorithm specialists to conduct research, explore new use cases and test for potential quantum advantage.

Meanwhile, educational tools like IBM Quantum Experience allow application specialists to learn how quantum computers work. The big picture here is the fact that a large gap remains between the pre-production research platforms currently available and mature quantum cloud services ready for integration into commercial workflows.

Quantum cloud ease of use is crucial

The main point I want to impress on you is the one that will be critical for industry uptake: we must make cloud-based quantum services easier to use. Yes, having the most powerful quantum computer on the market will be a differentiator, but it will not guarantee success. Take smartphones. When it was introduced in 2007, Nokia’s N95 had 20 times the download speed and a significantly better feature set than the first iPhone. But Apple delivered a product people preferred to use – and in the long term, the superior user experience won.

So here’s the thing. To make quantum computing commercially successful, we need to make workflows feel good. And to make this happen, we must adhere to the best practices of digital service development. A rigorous commitment to design thinking with the end-user’s goals in mind will pay huge dividends. What can be abstracted away? What features need to be accessible? How is data transferred? How much uptime do we need? Answering such questions will help shape feel-good – and successful – quantum cloud solutions.

Exploring the quantum cloud trade-offs

Good digital service design is facilitated by good service architecture, and both need to be designed around user needs. Here, requirements and trade-offs are likely to vary depending on the business sector and use case. That said, let’s add some context and perspective for the here and now by exploring the quantum trade-offs of reliability versus performance.

A quantum computer is fragile and needs regular calibration, which will take at least part of it offline. While the rate of runtime errors on a classical computer does not vary with time, the error rate on a quantum computer gets progressively worse as system parameters drift.

Calibration brings error rates down to a base level, but deciding how often to do this will affect the quality of service experienced by users. It follows that how often you calibrate should depend on what users need. If they are running many short programs, fewer calibrations are needed as a single failure is not as critical. This also means that resources can be utilised at a higher rate, reducing costs. For long-running programs, it is important that they run to completion, so computers should be calibrated more frequently to increase the probability of success.
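To make that trade-off concrete, here is a minimal sketch of a toy model. Everything in it is an assumption made for illustration: the error rate is taken to grow linearly with time since the last calibration, jobs are packed back to back between calibrations, and the function names and parameters are hypothetical rather than drawn from any real quantum cloud service.

```python
import math


def success_probability(job_duration, start_offset, base_rate, drift):
    """Probability that a job finishes without a runtime error.

    Toy assumption: the error rate is base_rate + drift * t, where t is
    the time since the last calibration. The job starts at start_offset
    and finishes before the next calibration.
    """
    end = start_offset + job_duration
    # Integral of (base_rate + drift * t) from start_offset to end.
    expected_errors = (base_rate * job_duration
                       + 0.5 * drift * (end ** 2 - start_offset ** 2))
    return math.exp(-expected_errors)


def mean_success(job_duration, interval, base_rate, drift):
    """Average success probability of jobs run back to back in one interval."""
    n_jobs = int(interval // job_duration)
    if n_jobs == 0:
        return 0.0  # the job does not fit between two calibrations
    probabilities = [
        success_probability(job_duration, i * job_duration, base_rate, drift)
        for i in range(n_jobs)
    ]
    return sum(probabilities) / n_jobs


def utilisation(interval, calibration_time):
    """Fraction of wall-clock time the machine is available to users."""
    return interval / (interval + calibration_time)


if __name__ == "__main__":
    # Compare a short and a long calibration interval (arbitrary time units).
    for interval in (2, 10):
        print(interval,
              round(utilisation(interval, calibration_time=1), 3),
              round(mean_success(1, interval, base_rate=0.01, drift=0.01), 3))
```

In this toy model, a longer interval between calibrations raises utilisation but lowers the average chance that a job completes cleanly. A real provider would replace the linear drift assumption with measured device data.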

In a life sciences context, let’s consider a quantum cloud solution optimised either for target identification or for quantum chemistry simulations. For initial sweeps of huge databases for potential drug targets and low-accuracy solutions, submissions can be batched. This means runs can be small – but if you want to make a lot of them, the runs need to be cheap. Once promising leads have been identified, simulating drug chemistry to high accuracy will require large, complex simulations. A cloud provider should have a good understanding of its users’ needs and adapt its calibration schemes accordingly. Quality of service guarantees will depend on use, as well as partnerships between quantum cloud providers and their customers.

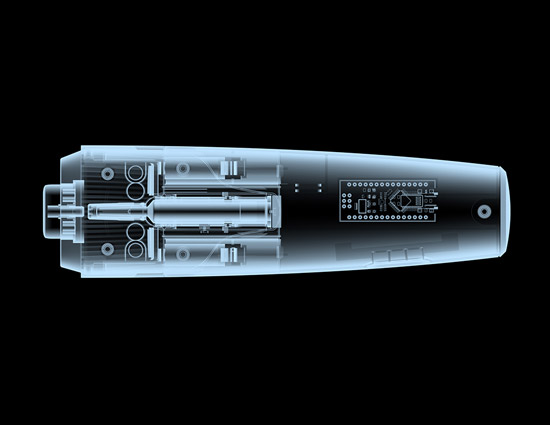

Quantum processing units in a hybrid world

Now let’s turn to heterogeneous computers. QPUs (quantum processing units) will be able to carry out currently unfathomable computations with ease. They’ll do it by using a completely different form of logic than current computational hardware. The flipside of this is that some tasks which are currently easily carried out on classical computers will be hard for QPUs. So to maximise their potential, QPUs will be integrated into a hybrid computational environment that mixes classical and quantum elements.

Seamless management of computation and data flows across heterogeneous components will be a key service requirement of the quantum cloud infrastructure. Without automated, easy-to-use tools, wide uptake of the technology will be hampered, eroding quantum computing’s commercial value.

Cloud platforms which provide high quality orchestration of heterogeneous computational elements will deliver equally high commercial value. They will outdo platforms that have higher individual component performance yet lack simple integration. In the life sciences context, users need to be able to focus on the science rather than worrying about how to get different computational resources to work together coherently.
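As a rough illustration of what hiding that plumbing from the user might look like, here is a minimal sketch of a dispatcher that routes each task to a classical or quantum backend. The `Task` class, the backend functions and the `orchestrate` helper are all hypothetical stand-ins invented for this example. No real cloud or quantum API is called.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Task:
    name: str
    kind: str       # "classical" or "quantum" (hypothetical labels)
    payload: float


def run_classical(task: Task) -> float:
    # Stand-in for a classical HPC job.
    return task.payload * 2


def run_quantum(task: Task) -> float:
    # Stand-in for a job sent to a QPU; no real quantum API is called.
    return task.payload ** 2


BACKENDS: Dict[str, Callable[[Task], float]] = {
    "classical": run_classical,
    "quantum": run_quantum,
}


def orchestrate(tasks: List[Task],
                backends: Dict[str, Callable[[Task], float]]) -> Dict[str, float]:
    """Route each task to the backend matching its kind and collect results."""
    results: Dict[str, float] = {}
    for task in tasks:
        if task.kind not in backends:
            raise ValueError(f"No backend registered for kind {task.kind!r}")
        results[task.name] = backends[task.kind](task)
    return results


if __name__ == "__main__":
    workload = [
        Task("screen_targets", "classical", 3.0),
        Task("simulate_lead", "quantum", 3.0),
    ]
    print(orchestrate(workload, BACKENDS))
    # {'screen_targets': 6.0, 'simulate_lead': 9.0}
```

The point of the sketch is that the user only describes the work. Which hardware runs each piece is decided by the platform, which is where much of the orchestration value would sit.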

I’ve focused on a pharma/life sciences example for this article, but the plain fact is that quantum computing will have a massive impact on any number of sectors. Naturally, user needs will vary enormously – as will the requirements of new service platforms. Current system architectures built for research must evolve to serve a new wave of companies integrating quantum cloud systems into their commercial workflows, allowing people like Alex to flourish professionally. If you’d like to keep the quantum cloud conversation going, please drop me an email – I’d love to hear from you.